True story.

It is a sunny November Tuesday around 12:30 p.m. I have just finished a meeting with one of my AU advisees. We chatted about how he could go about scheduling for next semester while waitlisted for the majority of his classes.

I look ahead at the rest of my to-do.txt file in Notepad to see what else needs to be finished before class tomorrow. Tomorrow is a hands-on lab day in Cryptography; however, instead of having the cryptography students solve CTF challenges, I’m going to see how well they perform at creating CTF challenges. To set this up, I’m going to create a brand-new CTFd instance in my Linode Cloud and give them permission to create the challenges. Maybe I’ll put it in Texas, Georgia, or New Jersey? How about India or Japan? … on second thought, I better not. I wouldn’t want my cybersecurity activity to generate another alert with the SOC. I’ll keep it stateside.

I open my Linode account. Linode is currently my go-to cloud provider for quickly deploying Linux machines in the cloud at a low cost. I am welcomed with 2 open abuse tickets. Throughout my life in cybersecurity, whether in my current role as an assistant professor of cybersecurity or my previous role as a cybersecurity engineer/exercise developer, I’ve often thought about that Disney movie Hercules. Remember the fight scene with the Hydra? Hercules solved one challenge (cut one head off); however, many more challenges/heads appeared. Is this going to be the case, yet again? If so, let’s get to it. This won’t solve itself. 🙂

At first I figured it was Linode letting me know that my machines are being abused; however, I’m more than aware of that. I constantly deploy Linux machines in the cloud for my cybersecurity students to help reinforce the topics we learn in class. I want to ensure my students not only know the terms and definitions to excel at phone and in-person interviews, but more importantly know how to actually do the hands-on work at the keyboard.

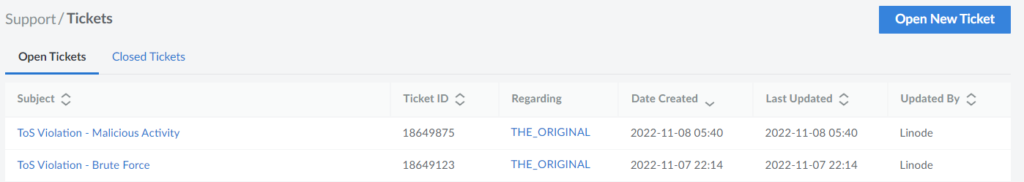

Anyways, I open the ‘click here’ hyperlink and see 2 ToS (Terms of Service) violations.

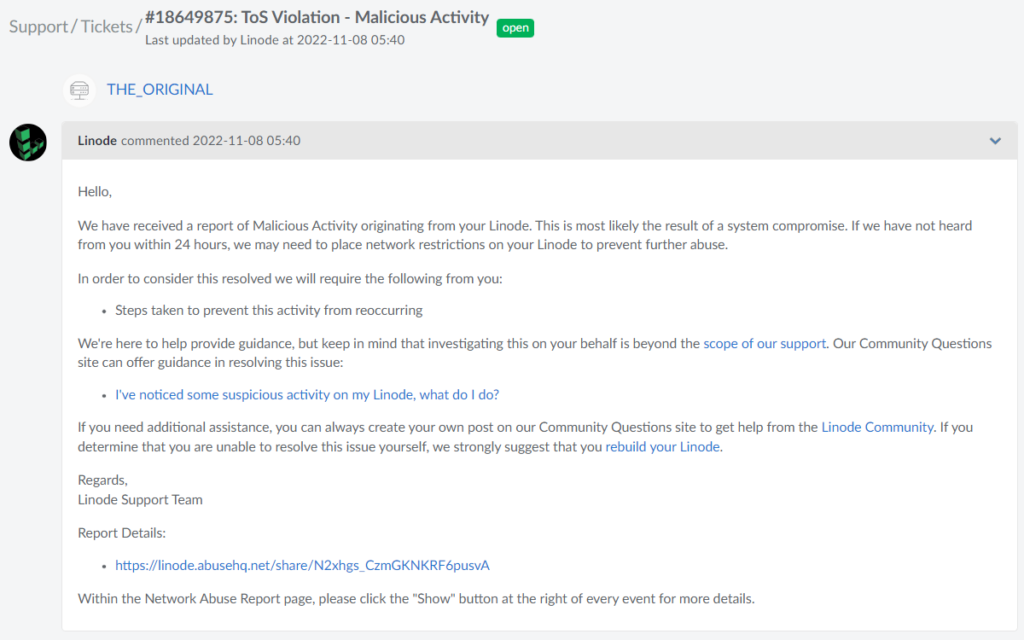

I click the ‘ToS Violation – Malicious Activity’ ticket and am informed that Linode believes my device has malicious activity on it. I am not surprised that Linode believes this is true. I probably have ~40 students accessing one of my Linode machines this week for yet another hands-on real-world weekly assignment. This assignment includes students creating their own SSH key pair, discovering what port SSH is running on (it was not TCP/22), uploading their SSH public key to the SSH server, and verifying they are able to access the remote machine via the SSH key pair (instead of username:password authentication). I am informed that if this activity does not cease, Linode may interrupt the network activity. I wouldn’t want that. My students need access to my Linode machines.

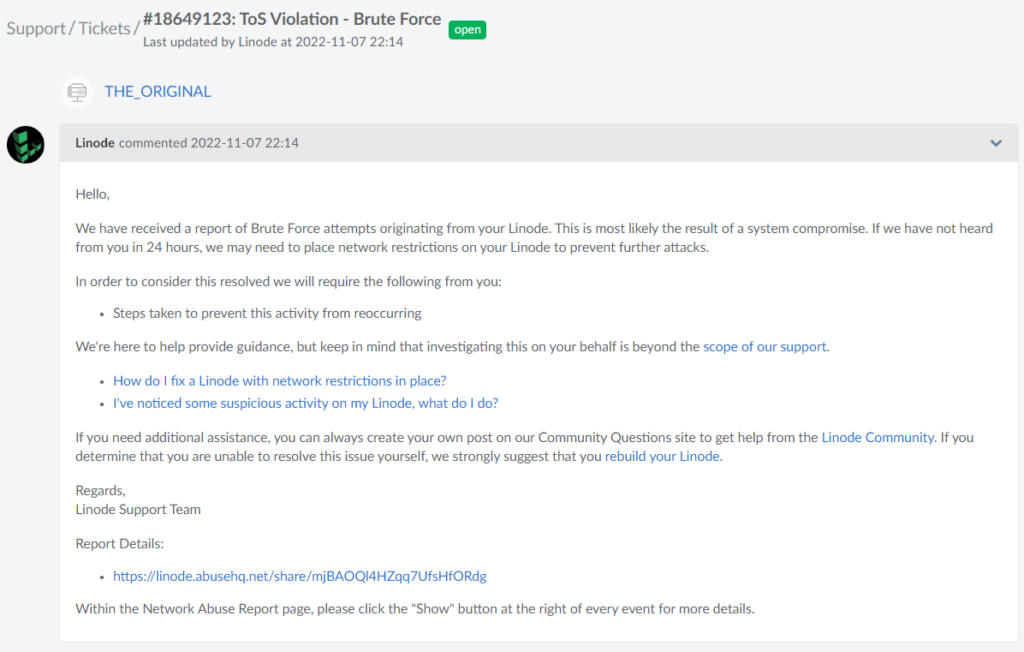

I open up the second ticket that discusses ‘Brute Force’ activity and see similar advice. At this point, I realize that the Linode tickets are against my ‘THE_ORIGINAL’ machine. This is not the machine the students are doing their assignment on! The students are doing their assignment against my ‘cyb210-lab7’ machine. One machine is in New Jersey while the other is in Texas. Is Linode reporting the incorrect machine?

I am more curious than concerned. Did one of my students gain access to this machine? If so, are they performing authorized or unauthorized activity? Which student is it? Have I not given them enough realistic and difficult CTF challenges and weekly hands-on activities? Do they still hunger for more challenges, and are they using their professor’s machines as targets? Before I question whether I failed to teach them ethical cybersecurity and the fact that we are on the same team, I want to prove to Linode that these tickets are false positives and there is nothing to worry about. I don’t want an incorrect Linode ticket hindering my students’ experience or their ability to prepare for the cyber warfare ahead of them.

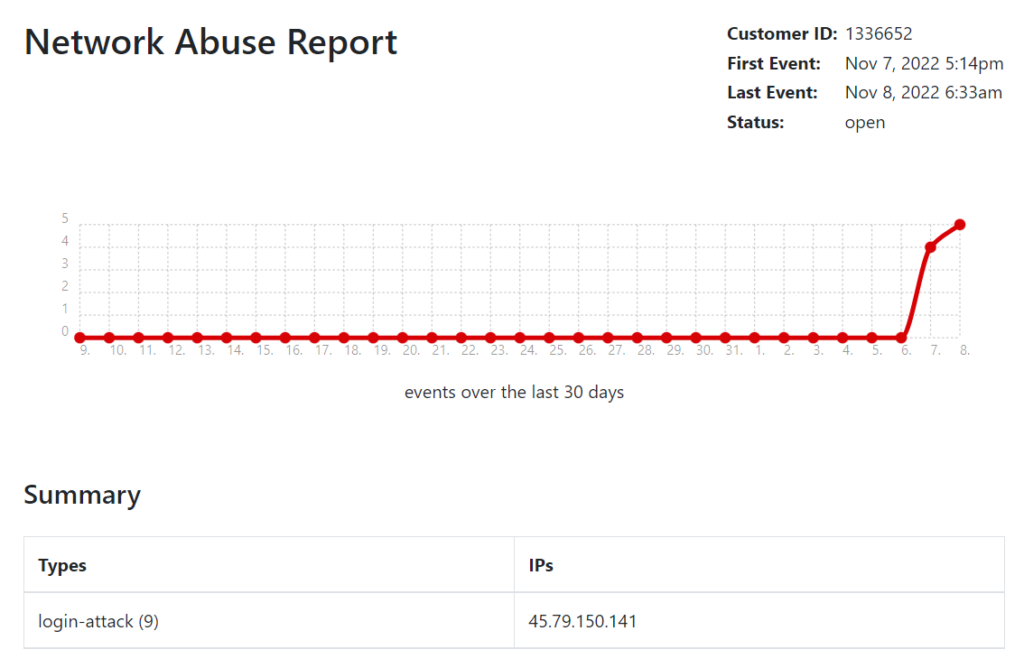

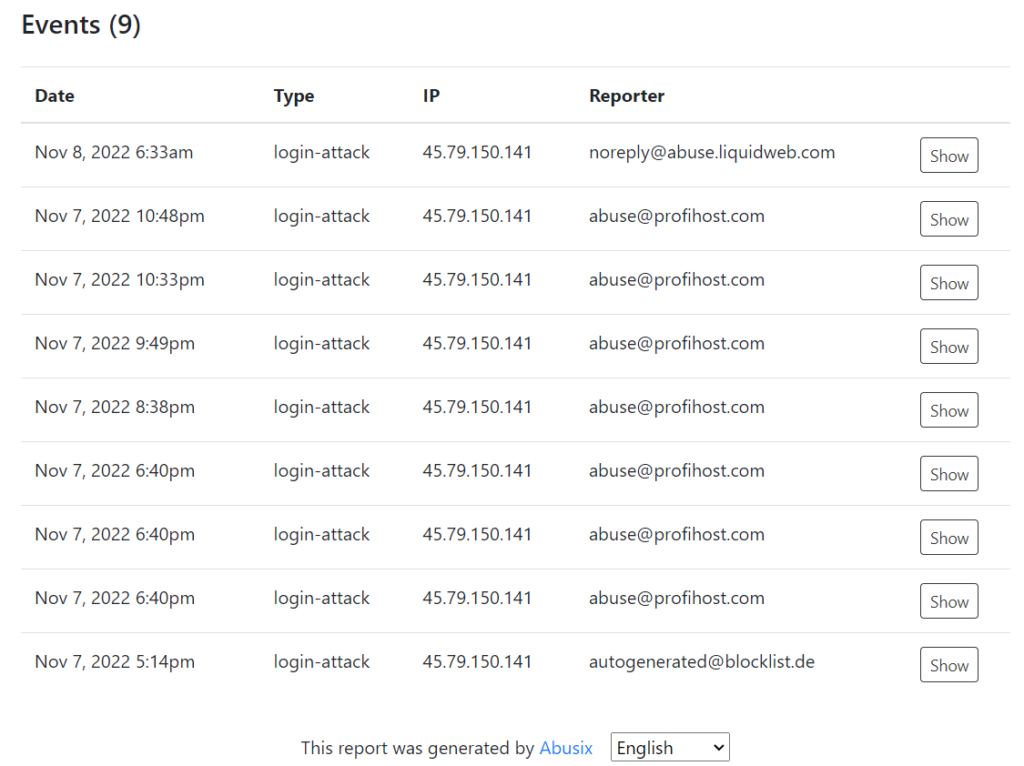

I click on the ‘Report Details’ link and see nine events about my machine being reported over the last 24 hours. These events are coming from three different reporters that are claiming my machine is attacking them! I’d like to think that they have the wrong IP address; however, since three different reporters claim my machine is at fault, I realize something must be happening.

Finally, I realize I should probably check on my ‘THE_ORIGINAL’ Linode. This Linode was the very first Linode I ever deployed. I’ve used it for a couple of years since first arriving at AU. I figured $5/month would be well worth the ability to have a Linux machine in New Jersey to demonstrate with while we are in South Carolina. I’ve become attached to this machine as if it were my own pet. This machine has been “man’s best friend” for me during my teaching activities. Anytime I needed to either host a file or demonstrate a cybersecurity activity, I could always rely on 45.79.150.141 to be there for me. Something about those numbers just seems to roll off the tongue, or maybe I’ve simply gained muscle memory from constantly repeating those wonderful four octets.

Regardless, my work has outgrown the use of just one Ubuntu Server (the operating system ‘THE_ORIGINAL’ is running). My ‘THE_ORIGINAL’ hosts files and has a few other use cases; however, the disk space is nearly full and I just want this machine to enjoy its steady role of helping my CTF students with the files and resources they need. I could never get rid of 45.79.150.141, much as so many people stay attached to their first cellphone number.

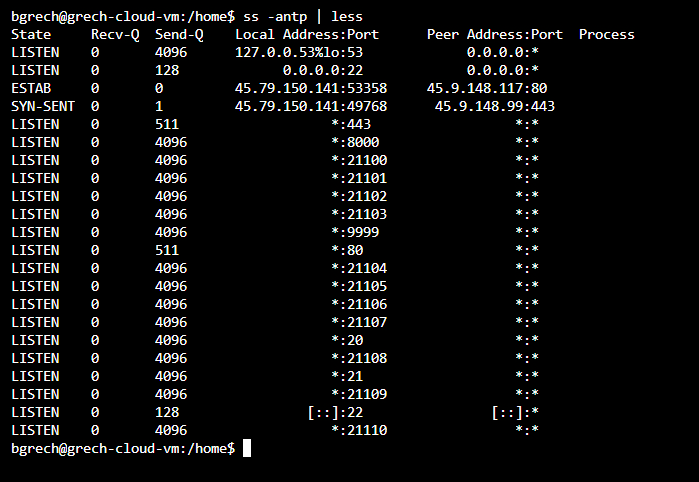

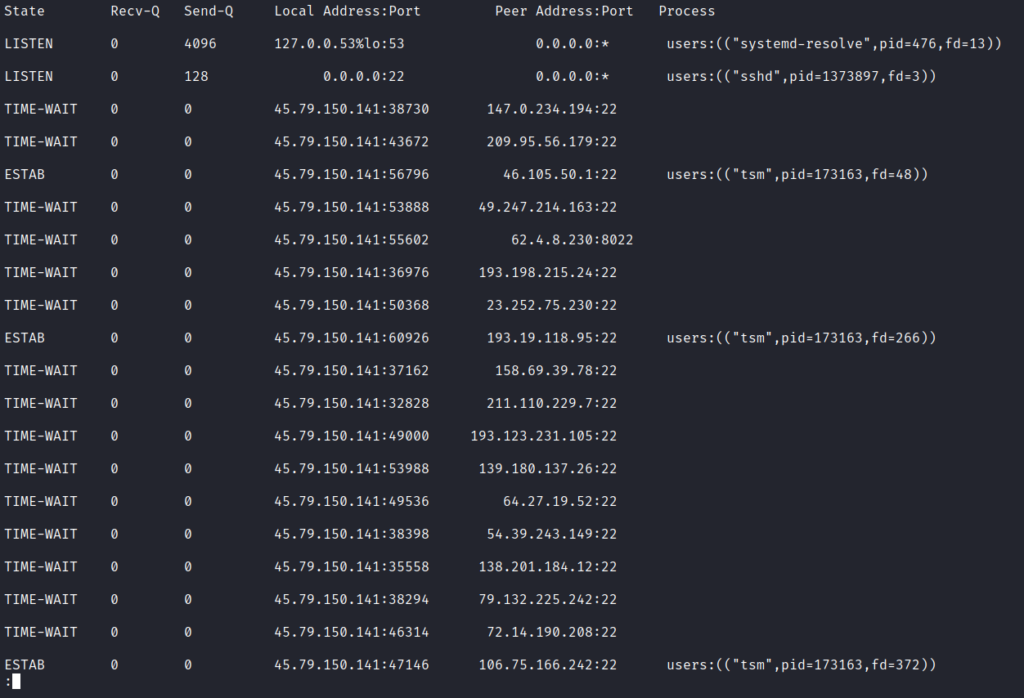

I log in to ‘THE_ORIGINAL’ from the Linode LISH Console and want to see what network socket statistics are present on this machine. Is my favorite machine (don’t tell the other Linodes) really brute forcing other systems? I run the command ‘ss -antp | less’ to view some basic network socket statistics and am surprised to see my Linode reaching out to TCP/80 on 45.9.148.117 and TCP/443 on 45.9.148.99. Why is this happening? My Linode is supposed to be the server. Machines are supposed to connect to me on my TCP/80, TCP/443, and other ports! This does not appear to be good. Maybe the three reporters are correct about this machine. Have I lost my best server?

I realize I may be here for a while. I spin up my Kali VM and SSH into 45.79.150.141 as I’ve done so many times. I have more freedom and flexibility using an SSH shell from within Kali rather than the Linode LISH Console. For instance, I can use the shortcut ‘Ctrl+r’ to reverse-search my bash history, instead of dealing with an annoying refresh of the Linode LISH Console browser window.

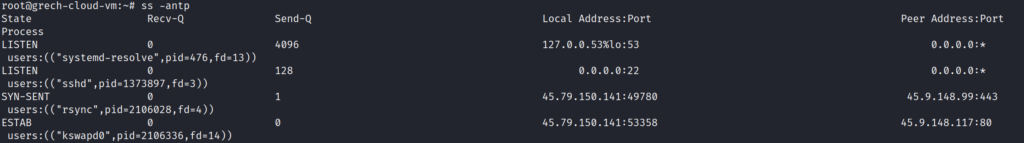

Anyways, I drop into a root shell to try and discover which processes are reaching out to those 2 IP addresses. I run the command ‘ss -antp’ again; as root, I should be able to see the process(es) behind the connections to those 2 strange IP addresses. I see that rsync and kswapd0 are the processes communicating with those remote machines. I now realize somebody has been messing around within my machine, as I have not set up either of these programs to reach out over HTTP (TCP/80) or HTTPS (TCP/443).

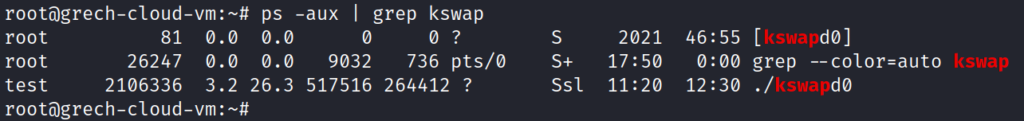
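For anyone who would rather not eyeball a long ‘ss -antp’ listing, here is a minimal Python sketch of the same idea: flag any row where the local side uses a non-service port and the peer side is on TCP/22, 80, or 443, which suggests the machine is acting as a client. The sample rows are illustrative only; the addresses come from reserved documentation ranges and the ephemeral ports are made up. Only the kswapd0 name, its PID, and TCP/80 come from the story above. The heuristic and the names `outbound_connections` and `SERVICE_PORTS` are my own assumptions, not part of any tool.

```python
import re

# One data row of `ss -antp`: State, Recv-Q, Send-Q, Local Address:Port,
# Peer Address:Port, and (when visible) the owning process.
ROW = re.compile(
    r'^(?P<state>\S+)\s+\d+\s+\d+\s+'
    r'(?P<local>\S+):(?P<lport>\d+)\s+'
    r'(?P<peer>\S+):(?P<pport>\d+)'
    r'(?:\s+users:\(\("(?P<proc>[^"]+)",pid=(?P<pid>\d+))?'
)

# Assumption: these are the ports this server is expected to *listen* on.
SERVICE_PORTS = frozenset({22, 80, 443})


def outbound_connections(ss_output, service_ports=SERVICE_PORTS):
    """Return (process, pid, peer_ip, peer_port) for rows that look outbound."""
    hits = []
    for line in ss_output.splitlines():
        m = ROW.match(line.strip())
        if m is None:  # header row, or LISTEN rows whose peer port is "*"
            continue
        lport, pport = int(m["lport"]), int(m["pport"])
        # Heuristic: the peer is on a service port and we are not.
        if pport in service_ports and lport not in service_ports:
            pid = int(m["pid"]) if m["pid"] else None
            hits.append((m["proc"], pid, m["peer"], pport))
    return hits


# Illustrative sample only (documentation IP ranges, invented ports).
SAMPLE = """\
State  Recv-Q Send-Q Local Address:Port  Peer Address:Port  Process
LISTEN 0      128    0.0.0.0:22          0.0.0.0:*          users:(("sshd",pid=700,fd=3))
ESTAB  0      0      192.0.2.10:22       198.51.100.7:50312 users:(("sshd",pid=901,fd=4))
ESTAB  0      0      192.0.2.10:41822    203.0.113.117:80   users:(("kswapd0",pid=2106336,fd=7))
"""

found = outbound_connections(SAMPLE)
for proc, pid, peer, port in found:
    print(f"{proc} (pid {pid}) -> {peer}:{port}")
```

Without root, the process column is often missing for sockets owned by other users, in which case the sketch reports the process and PID as `None`.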

I must figure out more about these processes that are running. Who is running them? Why are they running? I run the command ‘ps -aux | grep kswap’ to see all processes and user details. I see there is a test user running kswapd0! Why does test’s kswapd0 have a different process ID than the originally launched kswapd0 (PID 81)? It clearly did not start up at boot and only began running recently. I now see that the user test has launched process ID 2106336, running a program named kswapd0 that is reaching out to 45.9.148.117 on TCP port 80.

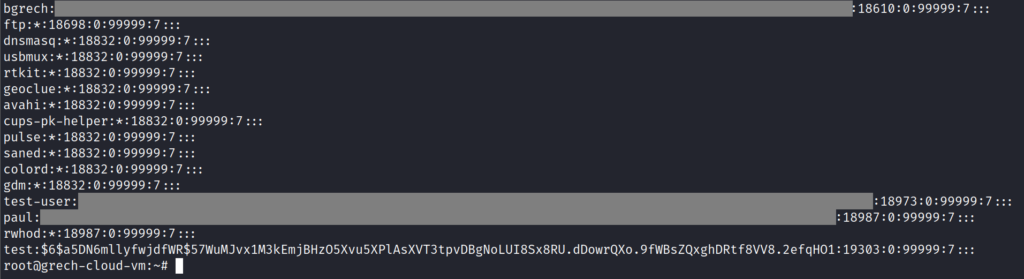
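One way to tell a genuine kswapd0 from an impostor borrowing its name: on Linux, kernel threads are children of kthreadd (PID 2), while a program launched by a user is not. The sketch below checks the `PPid` field of `/proc/<pid>/status`. The two status snippets are illustrative; PID 81 and PID 2106336 come from the story above, but the parent PID shown for the impostor is an assumption, as are the function names.

```python
from pathlib import Path


def parse_status(text):
    """Turn the 'Key:<tab>value' lines of /proc/<pid>/status into a dict."""
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def is_kernel_thread(status_text):
    """Kernel threads are kthreadd (PID 2) itself or one of its children."""
    fields = parse_status(status_text)
    return fields.get("Pid") == "2" or fields.get("PPid") == "2"


def status_of(pid):
    """Read the live status file for a PID (Linux only)."""
    return Path(f"/proc/{pid}/status").read_text()


# Illustrative snippets; the impostor's parent PID of 1 is an assumption.
GENUINE = "Name:\tkswapd0\nPid:\t81\nPPid:\t2\n"
IMPOSTOR = "Name:\tkswapd0\nPid:\t2106336\nPPid:\t1\n"

print(is_kernel_thread(GENUINE))   # True
print(is_kernel_thread(IMPOSTOR))  # False
```

On a live system, `is_kernel_thread(status_of(81))` would run the same check against the real process.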

Is the user test really an active user on my machine? To check on this, I open the file /etc/shadow that contains the usernames, hashes of passwords, and other details about users on this Linux machine. I see test user at the bottom of this list! This user is active! Other accounts that are active are bgrech, test-user, and paul (their password hashes have been redacted).

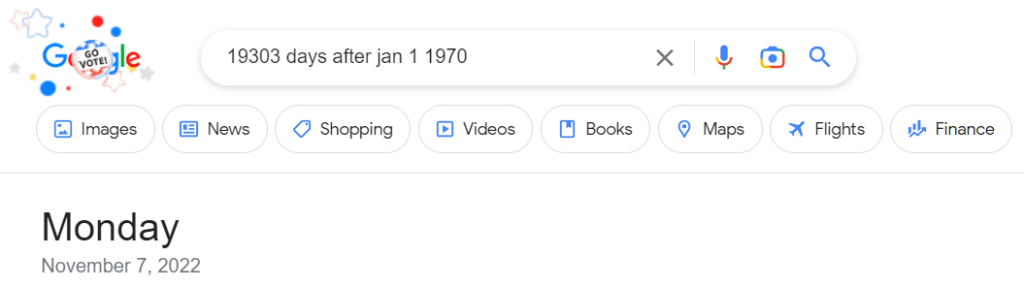

Is this a brand-new account or has it been around for a while? Notice the 19303 between the colons on the same line as test? That field is the date the password was last set or changed, expressed as the number of days since January 1, 1970. I head over to Google to figure out what day was 19,303 days after January 1, 1970. Google informs me this date was yesterday! This account had its password either set (new account) or changed (older account) yesterday!

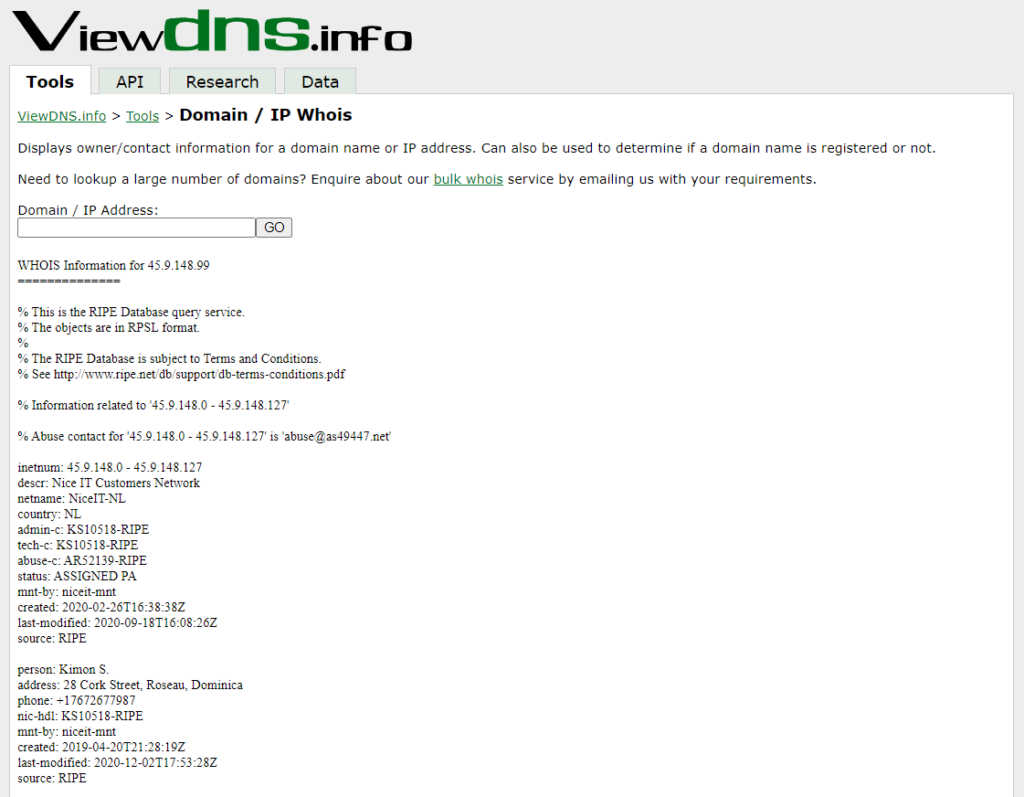
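The same day-count arithmetic can be done without Google. This is a small sketch that pulls the third colon-separated field out of a shadow entry and adds it to January 1, 1970. Only the username and the 19303 value come from the file above; the hash placeholder and the remaining fields in the sample line are assumptions for illustration.

```python
from datetime import date, timedelta

EPOCH = date(1970, 1, 1)


def last_password_change(shadow_line):
    """Return (username, date of last password change) for one shadow entry."""
    fields = shadow_line.strip().split(":")
    username, days_since_epoch = fields[0], int(fields[2])
    return username, EPOCH + timedelta(days=days_since_epoch)


# Hash redacted; every field after 19303 is a hypothetical placeholder.
entry = "test:$6$REDACTED:19303:0:99999:7:::"
user, changed = last_password_change(entry)
print(user, changed.isoformat())  # test 2022-11-07
```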

What are these IP addresses anyways? I dive into my favorite online resource for IP address information over at viewdns.info.

A Domain / IP Address lookup informs me that these machines are both hosted by Nice IT Customers Network and have contacts in the Netherlands and Dominica. As much as I’d like to dive more into this company and these addresses, I must move forward and can save attribution for later, if necessary.

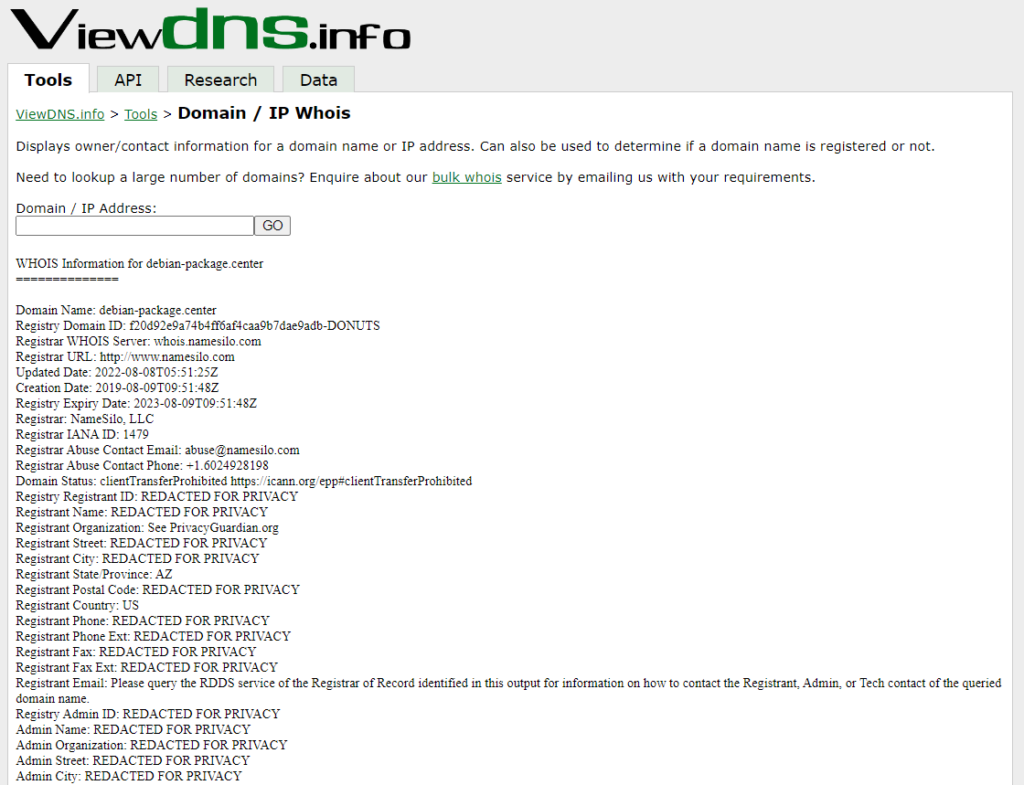

A Reverse IP Lookup search does not show anything for the IP address of 45.9.148.99; however, the IP address of 45.9.148.117 indicates that the domain debian-package.center resolves to this IP address. Have I been mistaken the whole time? Has my Ubuntu Server (which is based on Debian) been simply doing updates or maintenance? I perform a Domain / IP Whois search for this domain and find that most of the registration data is redacted for privacy. This does not seem like something the Debian developers would do; however, I want to see if this domain has been known to be malicious.

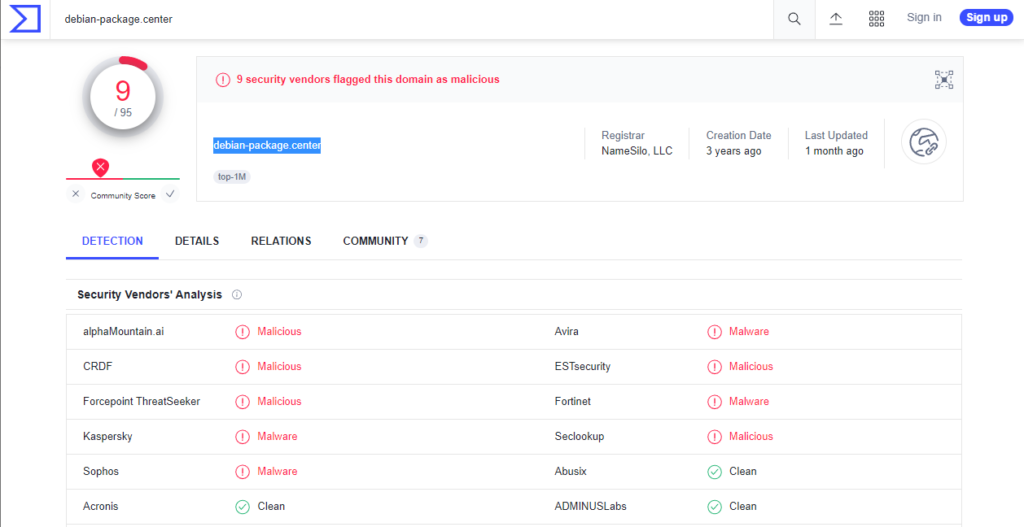

I browse to VirusTotal, search for debian-package.center, and discover that nine security vendors have flagged the domain as malicious. With the WHOIS and VirusTotal results, I have high confidence that this domain is not used for legitimate purposes.

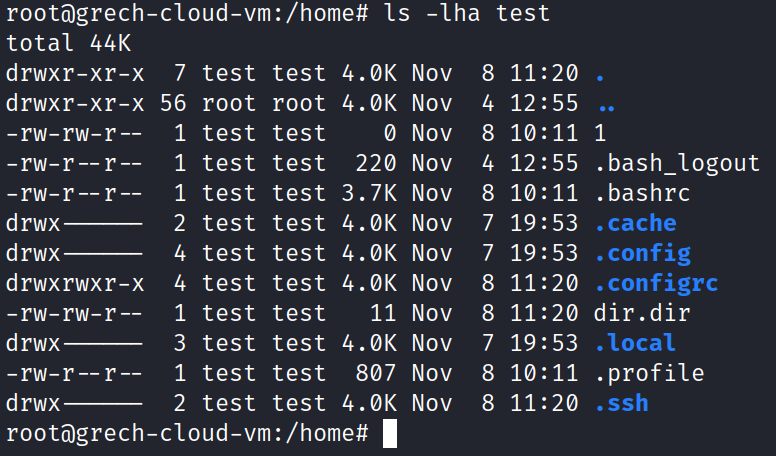

I run the command ‘ls -lha test’ to see if this home directory contains anything of interest. It does! I see dates ranging from Nov 4 12:55 – Nov 8 11:20. It is currently Nov 8 13:08. These are very recent.

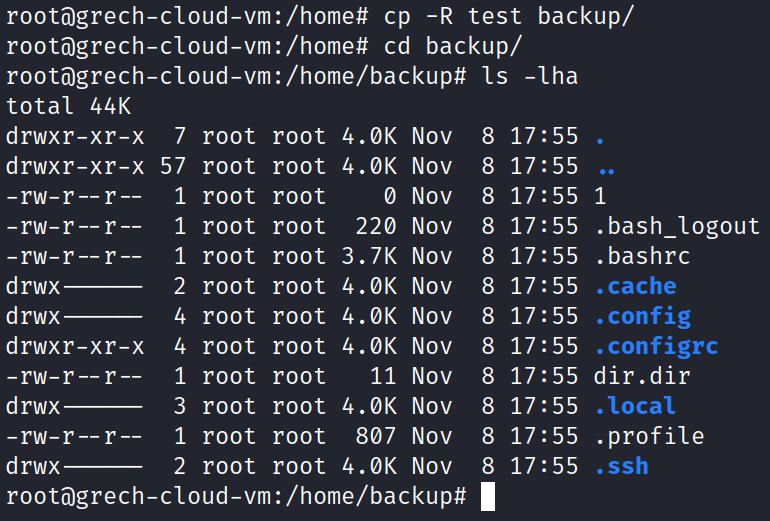

I want to continue to dive into the home directory of the test user; however, I do not want to modify or alter these files. I create a backup directory (that should be less suspicious to a hacker than creating a directory named ‘hacker-backup’, right?) and recursively copy all files to the backup directory for analysis without disturbing the test user. I run the command ‘ls -lha’ to view all files in long list format with human-readable file sizes. No bash history is found. I do not recognize certain file names that are present, such as 1 or dir.dir. I have not seen those files before in my career.

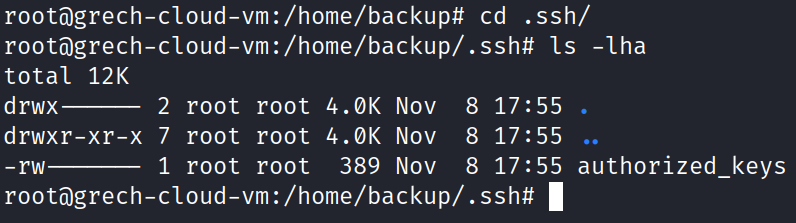

I am interested in discovering if there is anything of interest in the .ssh directory. I move into that directory and discover somebody has created a 389-byte file titled authorized_keys.

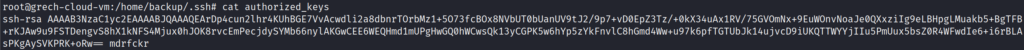

Running the ‘cat authorized_keys’ command, I see that in fact an SSH RSA public key has been added to my system. I also see the inappropriate term at the end of the file (no need to Google what that may mean).

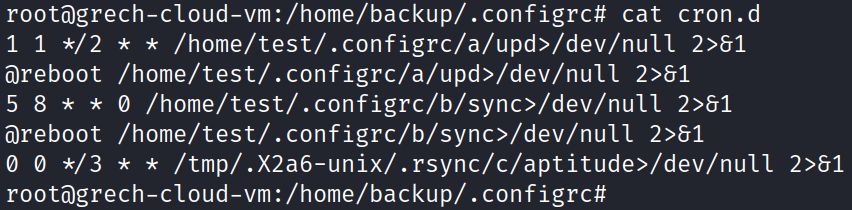

No other user on my machine has the directory .configrc, so why does the test user? Within this directory, I see a cron.d file that schedules various scripts to execute either at reboot or at set times, sending any standard output or error to /dev/null. For instance, the /home/test/.configrc/a/upd command appears to be scheduled to execute the first minute of the first hour each day that ends in an even number (e.g., 2nd, 4th, … 30th). Feel free to double-check my work and see whether you read the cron job the same way. 🙂

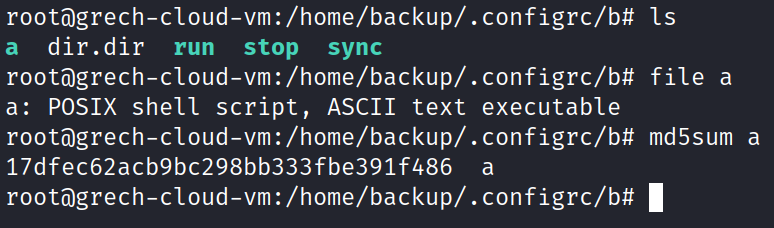

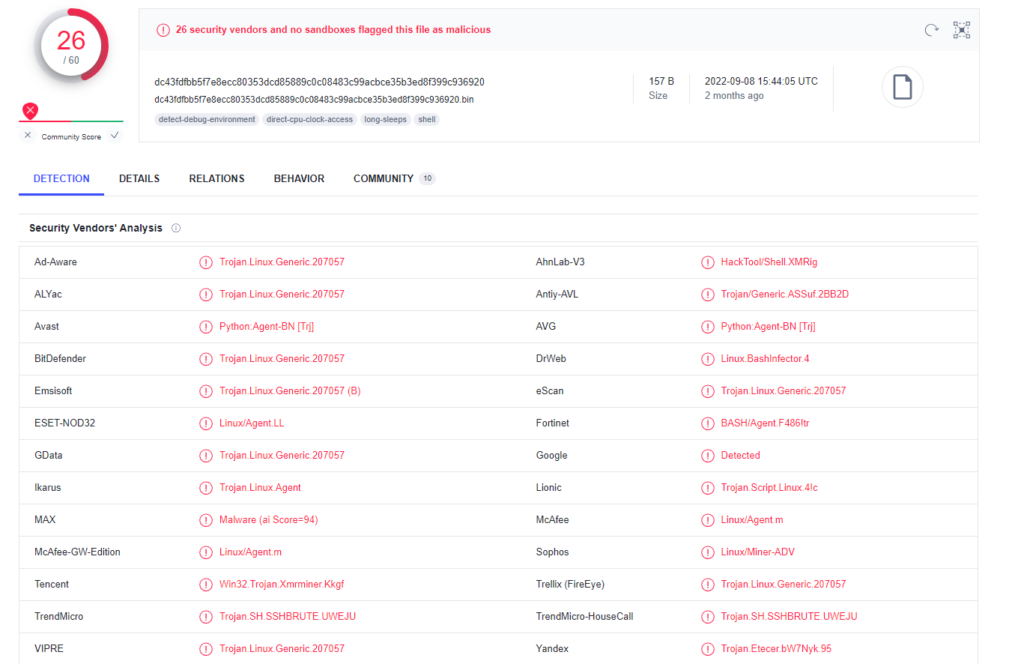
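For readers who want to take up the double-check, here is a minimal sketch of how a cron field expands. The exact entry lives in the screenshot, so the schedule used here, `1 1 */2 * *`, is purely an assumption on my part. Per crontab(5), `*` stands for the field's full range and `/2` steps through it from the first value, so in the day-of-month field `*/2` behaves like `1-31/2`. If the real entry has that form, it would fire at 01:01 on the 1st, 3rd, 5th, and so on, rather than on even-numbered days.

```python
def expand_field(field, lo, hi):
    """Expand one cron field (lists, ranges, '*', and '/step') into values."""
    values = set()
    for part in field.split(","):
        rng, _, step = part.partition("/")
        step = int(step) if step else 1
        if rng == "*":
            start, end = lo, hi          # '*' means first-to-last
        elif "-" in rng:
            a, b = rng.split("-")
            start, end = int(a), int(b)
        else:
            start = end = int(rng)       # a single value
        values.update(range(start, end + 1, step))
    return sorted(values)


# Hypothetical schedule: minute, hour, day-of-month, month, day-of-week.
minute, hour, dom, month, dow = "1 1 */2 * *".split()

minutes = expand_field(minute, 0, 59)
hours = expand_field(hour, 0, 23)
days = expand_field(dom, 1, 31)

print("minutes:", minutes)
print("hours:", hours)
print("days of month:", days)
```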

What are these programs that are set to execute? I navigate to the .configrc/b directory and see not only the sync file referenced in the cron.d file, but also a few other executable files. I run the ‘file a’ command to see what type of file a is. I’m informed that it is a shell script. I hash the a file as well and upload the hash into VirusTotal. 26 security vendors flag this hash as malicious!
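Hashing a suspicious file, rather than uploading the file itself, can be sketched in a few lines. The text above does not say which hash algorithm was used; SHA-256 is my assumption here, and VirusTotal accepts it for lookups. The file is read in chunks so that a large binary does not need to fit in memory. The temporary file and its contents are stand-ins for demonstration, not the real script.

```python
import hashlib
import os
import tempfile


def sha256_of(path, chunk_size=65536):
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Demonstration on a throwaway file with placeholder contents.
demo_bytes = b"#!/bin/sh\necho placeholder\n"
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(demo_bytes)
    demo_path = tmp.name

demo_hash = sha256_of(demo_path)
os.remove(demo_path)
print(demo_hash)
```

Against the copied evidence, the call would look like `sha256_of("a")` from inside the backup copy of the .configrc/b directory.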

How did the test user get into our system? From the home directory, it appears that this account has been around since at least Nov 4, unless the attacker modified the timestamps with a command such as ‘touch’.

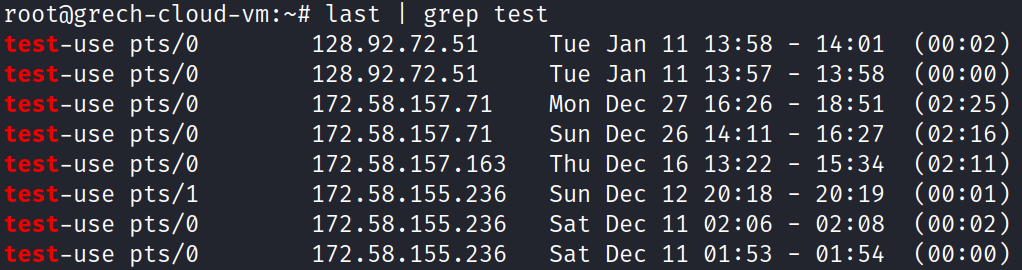

I run the command ‘last | grep test’ to search for any successful logins from the user test. Nothing comes back for test itself; the only matches are successful logins by test-user.

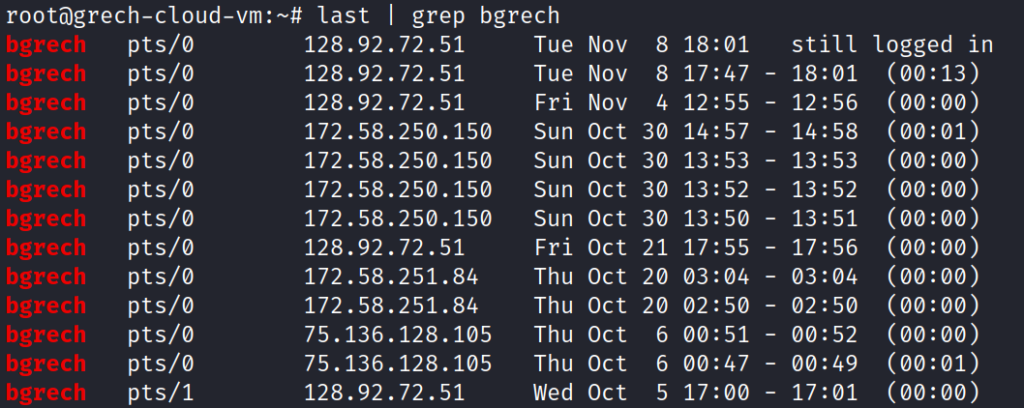

To verify this command works as intended, I run the command ‘last | grep bgrech’ and see my own most recent logins. Wait, why do I see a Nov 4 12:55 login? Isn’t that the same time as the earliest timestamp in the test user’s home directory?

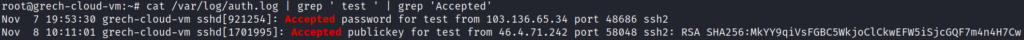

I want to continue diving into the logs to see if test was able to SSH into my system. The user already has an SSH public key in its home directory. I want to search the auth.log file and find out whether my machine accepted a connection from a user named test (and not test-user). I run the command “cat /var/log/auth.log | grep ' test ' | grep 'Accepted'”. The results show that the user test gained SSH access with a password on Nov 7 19:53:30 and was able to SSH into the machine again (with their RSA SSH key pair) at Nov 8 10:11:01. We have found where our system appears to have been initially compromised! The IP address 103.136.65.34 logged into my machine with the username test and a password that was accepted. Is this the IP address of a hacker, or of somebody else who has also been compromised? What about the second IP address, 46.4.71.242? I’ll leave that up to you to determine.
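The space-padded grep works, but matching on the username field directly avoids confusing test with test-user. Below is a minimal sketch that parses sshd “Accepted” lines and keeps only exact username matches. The log lines are invented for illustration: hostnames, PIDs, ports, key fingerprints, and IP addresses (taken from reserved documentation ranges) are all made up, and only the two test timestamps mirror the ones quoted above.

```python
import re

# Typical sshd success line:
#   "<Mon> <day> <HH:MM:SS> <host> sshd[<pid>]: Accepted <method> for <user> from <ip> port <n> ..."
ACCEPTED = re.compile(
    r"^(?P<when>\w{3}\s+\d+\s[\d:]{8})\s\S+\ssshd\[\d+\]:\s"
    r"Accepted (?P<method>\S+) for (?P<user>\S+) "
    r"from (?P<ip>\S+) port (?P<port>\d+)"
)


def accepted_logins(log_text, username):
    """Return (timestamp, auth method, source IP) for exact username matches."""
    hits = []
    for line in log_text.splitlines():
        m = ACCEPTED.match(line)
        if m and m["user"] == username:
            when = " ".join(m["when"].split())  # collapse padded day numbers
            hits.append((when, m["method"], m["ip"]))
    return hits


# Invented sample lines; not the real auth.log.
SAMPLE_LOG = """\
Nov  4 12:55:02 localhost sshd[1001]: Accepted publickey for bgrech from 192.0.2.44 port 50122 ssh2: RSA SHA256:EXAMPLE
Nov  6 09:14:40 localhost sshd[1450]: Accepted password for test-user from 192.0.2.45 port 50400 ssh2
Nov  7 19:53:30 localhost sshd[2210]: Accepted password for test from 203.0.113.34 port 41000 ssh2
Nov  7 19:54:10 localhost sshd[2222]: Failed password for test from 203.0.113.99 port 41001 ssh2
Nov  8 10:11:01 localhost sshd[3050]: Accepted publickey for test from 198.51.100.242 port 42000 ssh2: RSA SHA256:EXAMPLE
"""

logins = accepted_logins(SAMPLE_LOG, "test")
for when, method, ip in logins:
    print(when, method, ip)
```

The same exact-match idea applies to the earlier ‘last | grep test’ check, where test-user crowded the results.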

I run the command ‘ss -antp | less’ again to view some basic network socket statistics. I see my machine is now attempting (or has even established) multiple SSH sessions with machines all across the world! My machine appears to be attempting to gain access to IP addresses such as 147.0.234.194, 209.95.56.179, 49.247.214.163, and so many others. My machine may have also gained remote access to other machines such as 46.105.50.1, 193.19.118.95, and 106.75.166.242! It appears that not only has my machine been compromised, but it is also attempting to infect many other machines across the globe!

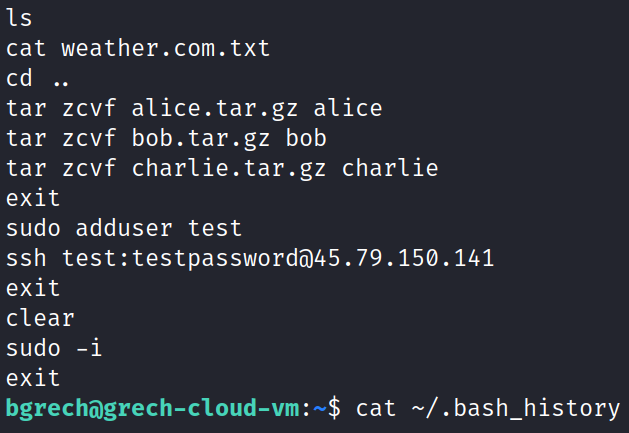

How did this test user appear? Where did it come from? Why does Nov 4 12:55 appear as both my (bgrech) login and the first timestamp in the test user’s home directory? Am I somehow to blame? Not wanting to rule out my own actions on this machine, I exit root and return to the user bgrech. I search through my own bash history with the command ‘cat ~/.bash_history’ and see that I added the user test!

The blame all comes back to me (not Linode, not the students, not a zero-day exploit, etc.). I remember that a few days ago a student submitted their assignment and claimed they were able to use a one-liner to put their username and password in the ssh-copy-id syntax. The one-liner the student used was ‘ssh-copy-id -i /home/kali/.ssh/id_rsa.pub -p 2222 <username>:<password>@45.56.65.143’ (this was against a separate machine, in Texas). I had not seen this used before. I’m more familiar with people getting around ssh’s insistence on manual password entry by using sshpass to supply a username and password in a one-liner. In my bash history you can see I wanted to explore whether this was an undocumented feature of SSH. It did not work for me with the username test and the password testpassword.

It turns out I ran the command ‘ssh bgrech@45.79.150.141’ to test this out on my ‘THE_ORIGINAL’ Linode (my most trusted machine), then simply exited and returned to grading without removing the embarrassingly weak account and credentials of test:testpassword. About three days later, this machine with one fatal error became part of a botnet. A Google search of the term mdrfcker (which we found earlier) turns up various articles suggesting that my machine was infected with the same crypto-botnet that others have encountered.

At the end of the day, maybe I caught a hacker, maybe I caught a botnet, or maybe I simply caught myself making a rookie mistake.

As much as I’d love to continue to dive into this, I must eradicate this malware, ensure Linode does not restrict my machines, and I better get back to deploying that CTFd platform for the students to use in the morning.